Deep Learning: The Neural Networks Powering Modern AI

The Engine Behind the AI Revolution

Every time you use Google Photos to search for "cats," unlock your phone with facial recognition, or ask ChatGPT a question, you’re benefiting from deep learning. This technology—inspired by the structure of the human brain—has transformed artificial intelligence from a niche academic field into a worldwide phenomenon. But what exactly is deep learning, and how did it become the driving force behind today’s AI boom?

Deep learning is a subset of machine learning that uses artificial neural networks with many layers ("deep" architectures) to learn complex patterns from data. It’s the reason AI can now recognize objects in images, translate languages, generate human-like text, and even create art. While the concept dates back to the 1950s, deep learning only became practical in the 2010s thanks to three converging factors: big data, powerful computers (especially GPUs), and algorithmic breakthroughs.

In this article, we’ll explore how deep learning works, why it’s so powerful, and what makes it different from traditional AI approaches.

From Perceptrons to Deep Networks: A Brief History

The story of deep learning begins with the perceptron, invented in 1957 by Frank Rosenblatt. A perceptron is a simple computational unit that takes multiple inputs, applies weights, adds a bias, and produces an output through a nonlinear activation function. Multiple perceptrons can be organized into layers to form a neural network.
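As a minimal sketch of that description, the snippet below implements a single perceptron with a step activation. The weights and bias are hand-picked for illustration (they are an assumption, not learned values) so that the unit behaves like a logical AND.

```python
def perceptron(inputs, weights, bias):
    """Return 1 if the weighted sum plus bias is positive, else 0 (step activation)."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 if weighted_sum > 0 else 0

# Hand-picked (not learned) parameters that make the unit act like logical AND.
and_weights = [1.0, 1.0]
and_bias = -1.5

# Evaluate the unit on all four combinations of two binary inputs.
and_outputs = [perceptron([a, b], and_weights, and_bias) for a in (0, 1) for b in (0, 1)]
print(and_outputs)  # [0, 0, 0, 1]
```

Only the input (1, 1) pushes the weighted sum above zero, which is why a single unit like this can represent AND but not more tangled patterns.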

Early neural networks were shallow—typically one or two hidden layers. They could learn simple patterns but struggled with complex problems like image recognition or natural language understanding. Two factors limited them:

- Limited computing power: Training large networks was computationally expensive

- Lack of data: Neural networks need lots of examples to generalize well

The field shifted toward other machine learning approaches (support vector machines, decision trees) in the 1990s and early 2000s. But two key developments, in 2006 and 2012, reignited interest in neural networks:

2006: Geoffrey Hinton and colleagues introduced practical methods for training deep neural networks (better weight initialization, unsupervised pre-training). Their work helped popularize the term "deep learning."

2012: AlexNet, a deep convolutional neural network developed by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton, won the ImageNet image classification competition by a wide margin, with a top-5 error of about 15% against roughly 26% for the runner-up. This was the moment deep learning proved it could solve problems that had resisted traditional AI.

Since then, deep learning has expanded rapidly in capability and application, matching or exceeding human performance on some narrow benchmarks such as image classification and board games.

How Deep Neural Networks Learn

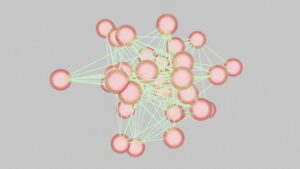

At its core, a deep neural network is a function that transforms input into output through a series of layers. Each layer consists of many neurons (units) that compute a weighted sum of their inputs, add a bias, and apply an activation function (ReLU, sigmoid, tanh).

A simple feedforward network:

- Input layer: receives raw data (e.g., pixel values)

- Hidden layers: progressively extract higher-level features

- Output layer: produces predictions (e.g., class probabilities)
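The three kinds of layer above can be sketched in a few lines of plain Python. All parameter values here are hypothetical and hand-picked for illustration; a trained network would learn them from data.

```python
import math

def relu(values):
    return [max(0.0, v) for v in values]

def softmax(values):
    shifted = [v - max(values) for v in values]  # subtract the max for numerical stability
    exps = [math.exp(v) for v in shifted]
    total = sum(exps)
    return [e / total for e in exps]

def dense(inputs, weights, biases):
    """One fully connected layer: weights[j] holds the incoming weights of unit j."""
    return [sum(x * w for x, w in zip(inputs, row)) + b for row, b in zip(weights, biases)]

# Hypothetical parameters: 3 inputs -> 2 hidden units -> 2 classes.
hidden_w = [[0.5, -0.2, 0.1], [-0.3, 0.8, 0.4]]
hidden_b = [0.0, 0.1]
output_w = [[1.0, -1.0], [-1.0, 1.0]]
output_b = [0.0, 0.0]

pixels = [0.9, 0.1, 0.4]                             # input layer: raw values
hidden = relu(dense(pixels, hidden_w, hidden_b))     # hidden layer: weighted sum, bias, ReLU
probs = softmax(dense(hidden, output_w, output_b))   # output layer: class probabilities
print(probs)
```

Each layer is the same operation (weighted sum, bias, activation) applied to the previous layer's output; depth comes from repeating it.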

The "deep" in deep learning refers to having many hidden layers (often 10, 50, 100, or even more). These multiple layers allow the network to learn hierarchical representations:

- Early layers detect simple features (edges, corners, textures in images; character n-grams in text)

- Middle layers combine simple features into parts (shapes, object parts; word meanings)

- Deeper layers combine parts into whole objects or high-level concepts (faces, sentences; semantic meanings)

Training process:

- Forward pass: Input flows through the network to produce a prediction

- Compute loss: Compare prediction to true label using a loss function (cross-entropy, MSE)

- Backward pass (backpropagation): Compute gradients of loss with respect to all weights

- Update weights: Use gradient descent (or variants like Adam) to adjust weights to reduce loss

- Repeat for many batches/epochs until convergence
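The steps above can be sketched end to end without a framework. This is an illustrative toy, assuming a hypothetical setup of one tanh hidden layer with four units, a sigmoid output, binary cross-entropy loss, and full-batch gradient descent on the XOR problem; real projects would use a framework's automatic differentiation instead of hand-written gradients.

```python
import math
import random

random.seed(0)  # fixed seed so the run is repeatable

# XOR: four labeled examples that a model with no hidden layer cannot fit.
data = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 0.0)]

n_hidden = 4
w1 = [[random.uniform(-1, 1) for _ in range(2)] for _ in range(n_hidden)]
b1 = [0.0] * n_hidden
w2 = [random.uniform(-1, 1) for _ in range(n_hidden)]
b2 = 0.0
learning_rate = 0.5

def forward(x):
    """Forward pass: tanh hidden layer, then a sigmoid output unit."""
    hidden = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(w1, b1)]
    logit = sum(w * h for w, h in zip(w2, hidden)) + b2
    return hidden, 1.0 / (1.0 + math.exp(-logit))

losses = []
for epoch in range(2000):
    grad_w1 = [[0.0, 0.0] for _ in range(n_hidden)]
    grad_b1 = [0.0] * n_hidden
    grad_w2 = [0.0] * n_hidden
    grad_b2 = 0.0
    epoch_loss = 0.0
    for x, y in data:
        hidden, p = forward(x)                                # 1. forward pass
        p_safe = min(max(p, 1e-12), 1 - 1e-12)                # guard against log(0)
        epoch_loss -= y * math.log(p_safe) + (1 - y) * math.log(1 - p_safe)  # 2. loss
        d_logit = p - y                                       # 3. backward pass (chain rule)
        grad_b2 += d_logit
        for j in range(n_hidden):
            grad_w2[j] += d_logit * hidden[j]
            d_pre = d_logit * w2[j] * (1 - hidden[j] ** 2)    # derivative through tanh
            grad_b1[j] += d_pre
            for i in range(2):
                grad_w1[j][i] += d_pre * x[i]
    step = learning_rate / len(data)                          # 4. update with the batch mean
    b2 -= step * grad_b2
    for j in range(n_hidden):
        w2[j] -= step * grad_w2[j]
        b1[j] -= step * grad_b1[j]
        for i in range(2):
            w1[j][i] -= step * grad_w1[j][i]
    losses.append(epoch_loss / len(data))                     # 5. repeat, tracking the loss

print(f"loss: {losses[0]:.3f} -> {losses[-1]:.3f}")
```

The recorded loss is measured before each update, so the first entry reflects the random starting weights and the last reflects the trained network.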

The magic is that the network learns its own features automatically from data, rather than requiring human engineers to design features manually. Given enough labeled examples and compute, deep networks can discover incredibly complex mappings from inputs to outputs.

Key Architectures for Different Domains

Deep learning isn’t one-size-fits-all. Different tasks require specialized network architectures:

Convolutional Neural Networks (CNNs)

CNNs are designed for grid-like data, especially images. They use convolutional layers that slide filters across the input, detecting local patterns in a translation-equivariant way: the same filter detects a feature wherever it appears, and shifting the input shifts the response accordingly. Pooling layers reduce spatial dimensions and add a degree of tolerance to small shifts.
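To make the sliding-filter idea concrete, here is a small sketch in plain Python. The edge filter and the 5x5 images are hypothetical, hand-picked values; in a real CNN the filter weights are learned.

```python
def conv2d(image, kernel):
    """Valid cross-correlation (the operation deep learning libraries call convolution)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

def max_pool(feature_map, size=2):
    """Non-overlapping max pooling; any ragged border is dropped."""
    rows = range(0, len(feature_map) - size + 1, size)
    cols = range(0, len(feature_map[0]) - size + 1, size)
    return [
        [max(feature_map[r + i][c + j] for i in range(size) for j in range(size)) for c in cols]
        for r in rows
    ]

# Hand-picked filter that responds where brightness rises from left to right.
edge_kernel = [[-1, 1], [-1, 1]]

# A 5x5 image with a dark-to-bright edge, and the same image with the edge moved right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
shifted = [[0, 0, 0, 1, 1] for _ in range(5)]

features = conv2d(image, edge_kernel)
features_shifted = conv2d(shifted, edge_kernel)
pooled = max_pool(features)

print(features[0])          # [0, 2, 0, 0]
print(features_shifted[0])  # [0, 0, 2, 0]
print(pooled)               # [[2, 0], [2, 0]]
```

The same filter fires on the edge in both images, and its response moves by one column when the edge does. Pooling then halves each spatial dimension of the feature map.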

Famous CNNs: LeNet-5 (1998), AlexNet (2012), VGGNet (2014), ResNet (2015), EfficientNet (2019)

Applications: image classification, object detection, semantic segmentation, face recognition, medical imaging.

Recurrent Neural Networks (RNNs) and LSTMs

RNNs process sequential data (text, time series, speech) by maintaining a hidden state that carries information from previous steps. However, basic RNNs suffer from vanishing gradients and struggle with long sequences. Long Short-Term Memory (LSTM) networks and Gated Recurrent Units (GRUs) introduced gating mechanisms to better capture long-range dependencies.
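The hidden-state update and the vanishing-gradient problem can both be seen in a scalar toy. The parameters below are hypothetical values chosen only for illustration; a real RNN uses weight matrices and vector states.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    """One step of a scalar vanilla RNN: the new state mixes the input with the old state."""
    return math.tanh(w_x * x + w_h * h_prev + b)

# Hypothetical scalar parameters.
w_x, w_h, b = 1.0, 0.5, 0.0

sequence = [1.0, 0.0, 0.0, 0.0, 0.0]  # a signal at the first step, then silence
h = 0.0
states = []
influence = 1.0   # d h_t / d h_1, accumulated with the chain rule
influences = []
for t, x in enumerate(sequence):
    h = rnn_step(x, h, w_x, w_h, b)
    states.append(h)
    if t > 0:
        # d h_t / d h_{t-1} = w_h * tanh'(pre-activation) = w_h * (1 - h_t^2)
        influence *= w_h * (1 - h ** 2)
    influences.append(influence)

print([round(s, 3) for s in states])
print([round(g, 4) for g in influences])
```

Each extra step multiplies the influence of the first state by a factor below 0.5 here, so after a handful of steps almost no gradient reaches the start of the sequence. Gating in LSTMs and GRUs is designed to give information a path that avoids this repeated shrinking.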

Applications: language modeling, machine translation, speech recognition, time series prediction.

Transformers

Introduced in 2017, transformers have largely replaced RNNs for sequence modeling. They use self-attention to weigh the importance of all positions in the sequence simultaneously, enabling parallel computation and better long-range understanding. Variants include BERT (encoder-only), GPT (decoder-only), and encoder-decoder models (T5, the original Transformer for translation).

Transformers power most modern large language models (GPT-4, Claude, Llama) and are also used in vision (Vision Transformers).
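A minimal sketch of scaled dot-product self-attention follows. For brevity it uses the token vectors directly as queries, keys, and values, which is a simplifying assumption: a real transformer first applies learned projection matrices and runs several attention heads in parallel.

```python
import math

def softmax(values):
    shifted = [v - max(values) for v in values]  # subtract the max for numerical stability
    exps = [math.exp(v) for v in shifted]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention: every position attends to every position."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)               # how much this position attends to each other one
        all_weights.append(weights)
        outputs.append([
            sum(w * v[dim] for w, v in zip(weights, values))
            for dim in range(len(values[0]))
        ])
    return outputs, all_weights

# Three hypothetical 2-dimensional token vectors.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)

for row in weights:
    print([round(w, 3) for w in row])
```

Each row of weights sums to 1, and no row depends on another, which is why all positions can be computed in parallel rather than step by step as in an RNN.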

Generative Adversarial Networks (GANs)

GANs consist of two networks: a generator that creates fake data (images, audio) and a discriminator that tries to distinguish real from fake. They compete in a minimax game, resulting in highly realistic generated content.
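The minimax game can be illustrated by evaluating both players' losses on a few discriminator outputs. The sketch below follows the original minimax formulation, where the value function is the mean of log D(real) plus the mean of log(1 - D(fake)); the probability values are made up for illustration, and in practice many implementations use a modified generator loss.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Negative value function: low when D(real) is near 1 and D(fake) is near 0."""
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1 - p) for p in d_fake) / len(d_fake)
    return -(real_term + fake_term)

def generator_loss(d_fake):
    """Minimax generator loss: low when the discriminator scores fakes near 1."""
    return sum(math.log(1 - p) for p in d_fake) / len(d_fake)

# Hypothetical discriminator outputs (probability that a sample is real).
d_real = [0.9, 0.8]
d_fake_caught = [0.1, 0.2]   # the discriminator spots the fakes
d_fake_fooled = [0.6, 0.7]   # the generator has improved and fools it

print(discriminator_loss(d_real, d_fake_caught), generator_loss(d_fake_caught))
print(discriminator_loss(d_real, d_fake_fooled), generator_loss(d_fake_fooled))
```

As the fakes become more convincing, the generator's loss falls while the discriminator's rises: one network's gain is the other's loss.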

Applications: image synthesis, style transfer, super-resolution, data augmentation.

Autoencoders

Autoencoders learn efficient data encoding by compressing input into a latent space and reconstructing it. Variants include Variational Autoencoders (VAEs) for generative modeling and denoising autoencoders for robust representations.

Graph Neural Networks (GNNs)

GNNs process graph-structured data (social networks, molecules, knowledge graphs) by propagating information along edges.
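One round of information propagation can be sketched as neighborhood averaging. This is a simplified illustration on a hypothetical four-node graph: it uses a plain mean with no learned weights, whereas actual GNN layers apply learned transformations before or after aggregating.

```python
def message_passing_round(features, edges):
    """Replace each node's feature with the mean of its own and its neighbors' features."""
    neighbors = {node: [] for node in features}
    for a, b in edges:  # edges are treated as undirected
        neighbors[a].append(b)
        neighbors[b].append(a)
    updated = {}
    for node, value in features.items():
        gathered = [value] + [features[n] for n in neighbors[node]]
        updated[node] = sum(gathered) / len(gathered)
    return updated

# Hypothetical path graph A - B - C - D with a signal only on node A.
features = {"A": 1.0, "B": 0.0, "C": 0.0, "D": 0.0}
edges = [("A", "B"), ("B", "C"), ("C", "D")]

after_one = message_passing_round(features, edges)
after_two = message_passing_round(after_one, edges)

print(after_one)
print(after_two)
```

After one round the signal has reached only B; after two it has reached C but not D. Stacking k layers lets each node see information from up to k hops away.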

Applications: drug discovery, recommendation systems, social network analysis, fraud detection.

Why Deep Learning Works So Well

Deep learning’s success rests on several factors:

Representation Learning

Traditional machine learning required hand-crafted features—experts manually extracting relevant information from raw data. Deep learning automates this: given raw pixels or text characters, networks learn hierarchical feature representations that are optimized for the task. This automation allows application to new domains without extensive feature engineering.

Scale and Data

Deep learning models have millions or billions of parameters. More parameters mean more capacity to learn complex patterns. But these large models require massive datasets to avoid overfitting. The internet has provided enormous labeled datasets (ImageNet, Common Crawl) and unlabeled data for self-supervised learning.
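To give a feel for how quickly parameter counts grow, the helper below counts weights and biases in a fully connected network. The layer sizes are hypothetical examples (784 inputs would correspond to a 28x28 image).

```python
def count_parameters(layer_sizes):
    """Weights plus biases for a fully connected network with the given layer widths."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

small = count_parameters([784, 128, 10])
wide = count_parameters([784, 4096, 4096, 10])

print(small)  # 101770
print(wide)   # 20037642
```

Widening the hidden layers and adding one more takes this toy network from about a hundred thousand parameters to about twenty million, because each pair of adjacent layers contributes the product of their widths.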

Computation

Training deep networks is computationally intensive, requiring GPUs or specialized hardware (TPUs). Moore’s Law and the rise of general-purpose GPU computing made training feasible. Distributed training allows even larger models.

Architectural Innovations

Key architectural advances made training deeper networks possible:

- ReLU activation: Mitigates vanishing gradients compared to sigmoid/tanh, because its derivative is exactly 1 for active units

- Batch normalization: Stabilizes training by normalizing layer inputs

- Residual connections (ResNet): Allows gradients to flow through many layers

- Attention mechanisms: Focus on relevant parts of input

- Layer normalization, weight initialization tricks: Further stabilize training
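A back-of-the-envelope calculation shows why the first and third items matter. The sketch below is deliberately simplified: it assumes the same hypothetical pre-activation value at every layer and ignores the weight matrices, so it only tracks the activation derivatives that backpropagation multiplies together.

```python
import math

def sigmoid_grad(z):
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)           # never exceeds 0.25

def relu_grad(z):
    return 1.0 if z > 0 else 0.0   # exactly 1 for active units

depth = 20
pre_activation = 0.5  # hypothetical value, assumed identical at every layer

# Backpropagation multiplies one local derivative per layer.
sigmoid_signal = sigmoid_grad(pre_activation) ** depth
relu_signal = relu_grad(pre_activation) ** depth

# A residual block computes x + f(x), so its local derivative is 1 + f'(x):
# the identity path keeps the product from collapsing even when f' is small.
residual_signal = (1.0 + sigmoid_grad(pre_activation)) ** depth

print(f"sigmoid:  {sigmoid_signal:.2e}")
print(f"relu:     {relu_signal:.2e}")
print(f"residual: {residual_signal:.2e}")
```

Through 20 sigmoid layers the gradient signal shrinks to a negligible fraction, while active ReLU units pass it through unchanged and the residual path keeps it from vanishing. Normalization layers address the complementary problem of keeping these magnitudes in a stable range.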

The Limits and Challenges of Deep Learning

Despite its successes, deep learning has significant limitations:

Data Hunger

Deep networks require vast amounts of labeled data for supervised learning. Acquiring labels is expensive and time-consuming. For many domains (medical imaging, specialized tasks), labeled data is scarce. Solutions include transfer learning, self-supervised pretraining, data augmentation, and synthetic data generation.

Compute Cost

Training state-of-the-art models can consume hundreds to thousands of megawatt-hours of electricity and cost millions of dollars in cloud compute. This raises environmental concerns and limits accessibility to well-funded organizations. Inference (using trained models) can also be expensive at scale.

Lack of True Understanding

Deep learning models are essentially sophisticated pattern matchers. They don’t understand meaning, causality, or physics the way humans do. They can make surprising errors on out-of-distribution examples—inputs that differ from training data. A model trained on daytime driving might fail at night; a language model might generate plausible but false statements (hallucinations).

Black Box Nature

Neural networks are notoriously difficult to interpret. We can see inputs and outputs, but understanding why a network made a particular decision is challenging. This lack of explainability is problematic for high-stakes applications (medical diagnosis, criminal justice) where accountability matters.

Catastrophic Forgetting

Neural networks trained on one set of tasks may forget those tasks when trained on new ones. This is different from humans, who can accumulate knowledge incrementally. Continual learning research aims to overcome this.

Bias and Fairness

Deep learning models absorb biases present in training data. If your dataset has more images of white men than Black women, the model will likely underperform for the latter. Biases in language data propagate to LLMs, producing stereotypical or harmful outputs. Detecting and mitigating bias is an active area of research.

Adversarial Examples

Tiny, carefully crafted perturbations to inputs can cause deep networks to misclassify them with high confidence. These adversarial examples reveal that models learn decision boundaries that differ from human perception. Adversarial training improves robustness but isn’t perfect.
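One well-known way to craft such perturbations is the fast gradient sign method (FGSM), sketched here on a logistic-regression classifier rather than a deep network so the gradient can be written by hand. The weights and input are hypothetical values chosen for illustration.

```python
import math

def predict(x, weights, bias):
    logit = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-logit))

def sign(v):
    return (v > 0) - (v < 0)

def fgsm(x, y, weights, bias, epsilon):
    """Move each input feature by epsilon in the direction that increases the loss."""
    p = predict(x, weights, bias)
    # For logistic regression with cross-entropy loss, d(loss)/d(x_i) = (p - y) * w_i.
    grad = [(p - y) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]

# Hypothetical classifier over 100 features with many small weights.
weights = [0.1, -0.1] * 50
bias = 0.0
x = [0.55, 0.45] * 50   # classified as class 1
y = 1.0

clean_prob = predict(x, weights, bias)
x_adv = fgsm(x, y, weights, bias, epsilon=0.1)
adv_prob = predict(x_adv, weights, bias)

print(round(clean_prob, 3), round(adv_prob, 3))
```

No feature moves by more than 0.1, yet the prediction crosses the decision boundary, because many small coordinated changes add up across a high-dimensional input.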

Energy Efficiency

The human brain operates on ~20 watts. By published estimates for GPT-3-scale models, a single training run can consume as much electricity as more than a hundred homes use in a year. Researchers are exploring more efficient architectures, neuromorphic computing, and model compression to reduce environmental impact.

Deep Learning in Practice: Tools and Frameworks

The deep learning ecosystem is rich with open-source tools:

Frameworks: PyTorch (Meta), TensorFlow (Google), JAX (Google), Keras (high-level API)

Libraries: Hugging Face Transformers, Hugging Face Diffusers for generative models, timm for vision, fairseq for NLP

Model zoos: pretrained models available for download (ImageNet-trained CNNs, BERT, GPT-2, Stable Diffusion)

Platforms: Colab, Kaggle, Paperspace for experimentation; cloud services (AWS SageMaker, Google Vertex AI, Azure ML) for production

Hardware: GPUs (NVIDIA CUDA), TPUs (Google), specialized accelerators (Groq, Cerebras)

Typical workflow: start with a pretrained model, adapt it to your task via fine-tuning or transfer learning, deploy as an API or on edge devices.

Getting Started with Deep Learning

If you’re new to deep learning, here’s a recommended path:

- Prerequisites: Learn Python, linear algebra, calculus, probability/statistics

- Online courses: Andrew Ng’s Deep Learning Specialization on Coursera; fast.ai course; CS231n (vision) or CS224n (NLP) from Stanford

- Hands-on: Implement a simple neural network from scratch (numpy) to understand fundamentals

- Use frameworks: Learn PyTorch or TensorFlow. Train a CNN on CIFAR-10, an RNN for text generation

- Experiment with pretrained models: Use Hugging Face to fine-tune BERT, GPT-2, or Stable Diffusion

- Build projects: Choose an application that interests you (image classifier, chatbot, recommendation system)

- Read papers: Start with classics (AlexNet, ResNet, Attention, Transformer, GPT) and follow arXiv

- Join community: Reddit (r/MachineLearning, r/deeplearning), Discord servers, conferences (NeurIPS, ICML)

Deep Learning vs. Traditional Machine Learning

It’s worth contrasting deep learning with other ML approaches:

| Aspect | Traditional ML | Deep Learning |
|---|---|---|
| Feature engineering | Manual, domain expertise required | Automatic, learned from data |
| Data needs | Works with smaller datasets | Needs large labeled datasets |
| Compute | Light to moderate | Heavy (GPUs/TPUs) |
| Interpretability | Often more interpretable (decision trees, linear models) | Black box, though interpretability methods exist (SHAP, LIME, saliency maps) |
| Performance on complex data | Struggles with raw unstructured data (pixels, text) | Excellent with raw data |
| Transfer learning | Limited | Very effective (pretrained models fine-tuned to new tasks) |

In practice, choose based on your problem, data, and constraints. Simpler models are still valuable when data is small, interpretability is critical, or compute is limited.

The Future of Deep Learning

Deep learning is evolving rapidly. Key trends:

Larger and More Efficient Models

Scale continues: GPT-3 had 175 billion parameters, and while the developers of GPT-4, Claude, and Gemini have not published parameter counts, these models are widely assumed to be very large. But efficiency is also improving: sparse models, mixture-of-experts, quantization, distillation. The future is both bigger and smaller: capable foundation models plus efficient deployment versions.
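Of the efficiency techniques mentioned here, quantization is the simplest to sketch. The example below shows one common scheme, symmetric per-tensor int8 quantization, on a handful of hypothetical weight values. It assumes at least one weight is non-zero; production toolkits add calibration, per-channel scales, and other refinements.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    scale = max(abs(w) for w in weights) / 127
    return [round(w / scale) for w in weights], scale

def dequantize(quantized, scale):
    return [q * scale for q in quantized]

# Hypothetical weight values for illustration.
weights = [0.82, -1.27, 0.003, 0.45, -0.61]

quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(quantized)   # [82, -127, 0, 45, -61]
print(max_error <= scale / 2 + 1e-12)
```

Each weight now fits in one byte instead of four, at the cost of a rounding error of at most half the scale per weight.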

Multimodal Learning

Models that process and generate multiple modalities (text, image, audio, video) are the frontier. GPT-4V, Gemini, and Claude demonstrate capabilities across vision and language. Future systems may integrate these modalities more seamlessly, much as humans combine their senses.

Self-Supervised and Unsupervised Learning

Reducing reliance on labeled data is crucial. Self-supervised pretraining (predicting missing parts, masked modeling) has been hugely successful in language (BERT) and vision (MAE, DINO). Future models may learn from raw, unlabeled data like humans learn from observation.

Causal Reasoning and World Models

Current deep learning excels at correlation, not causation. Representing and reasoning about cause-effect relationships is a major challenge. World models—internal representations of how the world evolves—are key for planning, counterfactuals, and robust decision-making.

Explainability and Interpretability

As AI systems become more prevalent in critical domains, the need to understand their decisions grows. Research in interpretable AI aims to make neural networks more transparent without sacrificing performance.

Neuromorphic Computing and Alternatives

Mimicking the brain’s efficiency might require moving beyond conventional von Neumann architectures. Neuromorphic chips, memristors, and other hardware could enable more brain-like, energy-efficient computation. Alternative paradigms (spiking neural networks, liquid state machines) may complement deep learning.

Democratization and Accessibility

Tools like Hugging Face, AutoML, and no-code platforms are making deep learning accessible to non-experts. The future may see AI development as widespread as web development today.

Conclusion: Deep Learning is Just Getting Started

Deep learning has already transformed the world—from how we interact with our phones to how scientists discover new drugs. But we’re still in the early days. The technology has limitations, and there’s plenty of low-hanging fruit to improve efficiency, robustness, and understanding.

What makes deep learning special is its generality. The same core principles—stacking layers, gradient-based optimization, representation learning—apply across vision, language, audio, robotics, and beyond. It’s a universal tool for learning from data.

As deep learning continues to advance, we can expect:

- More capable and reliable AI assistants

- Breakthroughs in scientific discovery (biology, materials, physics)

- Personalized education and healthcare

- Autonomous systems that operate safely in the real world

- Creative tools that augment human imagination

The next time you see an AI do something remarkable (beat a Go champion, write a poem, diagnose a disease), remember that beneath the surface, it’s likely a deep neural network, trained on massive data, discovering patterns at scales we’re only beginning to comprehend. Deep learning isn’t magic: it’s math, compute, and data, orchestrated in a way that’s finally unlocking AI’s potential.

The journey from perceptrons to transformers has been remarkable. Where deep learning takes us next is anyone’s guess, but one thing is certain: the revolution is far from over.

