Reinforcement Learning: How AI Learns Through Trial and Error

The Learning Strategy of Babies, Animals, and Machines

Watch a baby learn to walk. They don’t read a manual. They don’t watch a lecture. They get up, fall down, get up again, adjust their balance, and gradually master the skill through repeated attempts. A kitten learns to hunt by pouncing, missing, and trying again. This trial-and-error learning is fundamental to how living beings acquire new skills.

Now imagine if machines could learn the same way—not from labeled datasets or explicit programming, but by interacting with an environment, trying actions, and learning from the consequences. That’s reinforcement learning (RL), one of the most exciting and powerful paradigms in artificial intelligence.

Reinforcement learning is the technology behind AlphaGo’s historic victory over world champion Lee Sedol, OpenAI’s Dota 2 champion bot, robots that learn to walk, and research on driving policies for self-driving cars. It’s how AI can master complex tasks where the rules aren’t fully known and success requires a sequence of decisions.

In this article, we’ll explore how reinforcement learning works, why it’s different from other AI approaches, and what it can achieve.

The Reinforcement Learning Framework

At its core, reinforcement learning is about learning what to do to maximize a reward signal. The setup involves:

- Agent: The learner or decision-maker (the AI)

- Environment: The world the agent interacts with (a game, a robot’s surroundings, a financial market)

- State: A representation of the current situation (the chess board positions, the robot’s posture, the market price)

- Action: What the agent can do (move a piece, take a step, buy or sell)

- Reward: Immediate feedback from the environment (winning a game +1, losing -1, or a more nuanced score)

- Policy: The agent’s strategy for choosing actions given states

The agent’s goal: find a policy that maximizes cumulative reward over time (not just immediate reward, but long-term payoff).

This is called sequential decision-making—each action affects future states and rewards. It’s fundamentally different from supervised learning (where you have labeled examples) and unsupervised learning (finding patterns in unlabeled data). In RL, the agent must explore, experiment, and learn from experience, much like an animal or human.
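The interaction loop described above can be sketched in a few lines. This is a minimal illustration, not a standard API: `CoinFlipEnv`, `run_episode`, and `always_heads` are hypothetical names invented for this example, and the environment is a toy (guess the outcome of a biased coin).

```python
import random


class CoinFlipEnv:
    """Hypothetical toy environment: guess the outcome of a biased coin."""

    def __init__(self, p_heads=0.7, episode_length=10, seed=0):
        self.p_heads = p_heads
        self.episode_length = episode_length
        self.rng = random.Random(seed)
        self.t = 0

    def reset(self):
        self.t = 0
        return self.t  # state: the current time step

    def step(self, action):
        # action: 1 = guess heads, 0 = guess tails
        outcome = 1 if self.rng.random() < self.p_heads else 0
        reward = 1.0 if action == outcome else 0.0
        self.t += 1
        done = self.t >= self.episode_length
        return self.t, reward, done


def run_episode(env, policy):
    """Run one episode and return the cumulative (undiscounted) reward."""
    state = env.reset()
    total_reward = 0.0
    done = False
    while not done:
        action = policy(state)                  # the policy maps state -> action
        state, reward, done = env.step(action)  # the environment responds
        total_reward += reward
    return total_reward


def always_heads(state):
    """A fixed policy that ignores the state and always guesses heads."""
    return 1


episode_return = run_episode(CoinFlipEnv(), always_heads)
print(episode_return)
```

Every element of the framework appears here: the policy picks an action from the state, the environment returns a new state and a reward, and the quantity of interest is the reward accumulated over the whole episode.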

Key Concepts That Make RL Work

Exploration vs Exploitation

The agent faces a fundamental dilemma: should it exploit what it already knows (choose the action with highest estimated reward) or explore new actions to discover potentially better strategies? Too much exploration leads to inefficient performance; too much exploitation might miss better solutions.

Think of choosing restaurants: you could always go to your favorite (exploitation) or try new places (exploration). The best long-term strategy balances both. RL agents use techniques like ε-greedy (random exploration with probability ε), Thompson sampling, or optimism under uncertainty to manage this trade-off.
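As a rough sketch of the ε-greedy rule mentioned above (the function name and the example estimates are assumptions made for illustration):

```python
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """Pick an action index from a list of estimated action values."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))  # explore: uniform random action
    # exploit: action with the highest estimate (first one on ties)
    return max(range(len(q_values)), key=lambda a: q_values[a])


estimates = [0.2, 0.8, 0.5]  # hypothetical value estimates for 3 restaurants
rng = random.Random(0)
choices = [epsilon_greedy(estimates, epsilon=0.1, rng=rng) for _ in range(1000)]
share_best = choices.count(1) / len(choices)
print(f"picked the current favorite {share_best:.0%} of the time")
```

With ε = 0.1 the agent mostly returns to its current favorite but still samples the other options occasionally, which is what lets its estimates improve over time.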

Discounting Future Rewards

A reward received now is more valuable than the same reward received later (due to uncertainty and opportunity cost). RL uses a discount factor γ (between 0 and 1) to weight future rewards less: a reward received k steps from now is multiplied by γ^k. The agent learns to value actions that lead to earlier rewards more highly.
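A small worked example may help here. The helper below (a hypothetical name, written for this article) computes the discounted sum of a reward sequence and compares an early reward with a late one:

```python
def discounted_return(rewards, gamma):
    """Sum of rewards where the reward at step t is weighted by gamma**t."""
    g = 0.0
    # Work backwards so each step is just: reward + gamma * (return after it)
    for r in reversed(rewards):
        g = r + gamma * g
    return g


early = discounted_return([1, 0, 0], gamma=0.9)  # reward arrives immediately
late = discounted_return([0, 0, 1], gamma=0.9)   # same reward, two steps later
print(early, late)
```

The same reward of 1 is worth 1.0 when it arrives immediately and about 0.81 (0.9 squared) when it arrives two steps later.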

Value Functions and Q-Functions

The agent needs to estimate how good it is to be in a particular state (value function) or how good it is to take a particular action in a particular state (Q-function). These estimates guide decision-making. The challenge: learning these estimates from experience, without access to a model of the environment.
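To illustrate how the two kinds of estimate relate, here is a minimal sketch with a made-up Q-table (the states, actions, and numbers are purely illustrative). When the agent acts greedily, the value of a state is the largest Q-value available in it:

```python
# Hypothetical Q-table for two states and two actions.
q_table = {
    "s0": {"left": 0.1, "right": 0.7},
    "s1": {"left": 0.4, "right": 0.3},
}


def greedy_action(q_table, state):
    """Action with the highest estimated Q-value in this state."""
    return max(q_table[state], key=q_table[state].get)


def greedy_state_value(q_table, state):
    """Value of a state when acting greedily: V(s) = max over a of Q(s, a)."""
    return max(q_table[state].values())


for s in q_table:
    print(s, greedy_action(q_table, s), greedy_state_value(q_table, s))
```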

Major Reinforcement Learning Algorithms

Q-Learning

One of the simplest and most famous RL algorithms, Q-learning learns a Q-function that estimates the expected cumulative reward for each state-action pair. It updates these estimates with a rule derived from the Bellman optimality equation:

Q(s,a) ← Q(s,a) + α [r + γ max_a' Q(s',a') - Q(s,a)]

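where α is the learning rate, r is the observed reward, and the max is taken over the actions available in the next state. Q-learning is model-free (it doesn’t need to know the environment dynamics) and off-policy (it learns the value of the greedy policy even while following a different, more exploratory behavior policy, which is why it can reuse past experience). It works well for small, discrete problems but struggles with large state spaces (like images).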
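The update rule can be seen in action on a toy problem. The sketch below is an illustration under stated assumptions, not a reference implementation: the five-state corridor, the reward of 1 at the right end, and all hyperparameters are invented for this example.

```python
import random

N_STATES, GOAL = 5, 4   # hypothetical corridor: states 0..4, goal at the right end
LEFT, RIGHT = 0, 1


def step(state, action):
    """Deterministic corridor dynamics; reward 1 only on reaching the goal."""
    next_state = max(0, state - 1) if action == LEFT else state + 1
    done = next_state == GOAL
    return next_state, (1.0 if done else 0.0), done


def choose_action(q_row, epsilon, rng):
    """Epsilon-greedy with random tie-breaking."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_row))
    best = max(q_row)
    return rng.choice([a for a, q in enumerate(q_row) if q == best])


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            action = choose_action(q[state], epsilon, rng)
            next_state, reward, done = step(state, action)
            # Target: r + gamma * max_a' Q(s', a'); no bootstrapping at the end
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


q_table = train()
greedy_policy = [max((LEFT, RIGHT), key=lambda a: q_table[s][a]) for s in range(GOAL)]
print(greedy_policy)
```

After training, the greedy policy should choose RIGHT in every non-terminal state, and the learned values shrink by a factor of γ for each extra step away from the goal.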

Deep Q-Networks (DQN)

DeepMind’s breakthrough came when they combined Q-learning with deep neural networks. Instead of a table, DQN uses a neural network to approximate the Q-function, taking raw pixel input and outputting Q-values for each action.

Key innovations made DQN stable:

- Experience replay: Store transitions in a replay buffer and sample randomly to break correlations

- Target networks: Use a separate, slowly updated network for target Q-values to stabilize learning

DQN learned to play Atari games from pixels, achieving human-level performance across many games. This was a landmark: raw pixels → actions via trial and error.
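The two stabilizing ideas listed above can be sketched without a neural network. The class and function below are simplified illustrations (the names `ReplayBuffer` and `td_target` are chosen for this example, and the Q-values passed to `td_target` stand in for the output of a target network):

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size store of transitions, sampled uniformly at random."""

    def __init__(self, capacity, seed=None):
        self.buffer = deque(maxlen=capacity)  # oldest transitions drop out first
        self.rng = random.Random(seed)

    def push(self, state, action, reward, next_state, done):
        self.buffer.append((state, action, reward, next_state, done))

    def sample(self, batch_size):
        # Uniform sampling breaks the correlation between consecutive steps
        batch = self.rng.sample(list(self.buffer), batch_size)
        states, actions, rewards, next_states, dones = zip(*batch)
        return states, actions, rewards, next_states, dones

    def __len__(self):
        return len(self.buffer)


def td_target(reward, next_q_values, done, gamma):
    """Training target; next_q_values would come from the target network."""
    return reward if done else reward + gamma * max(next_q_values)


buffer = ReplayBuffer(capacity=1000, seed=0)
for i in range(100):
    buffer.push(state=i, action=0, reward=0.0, next_state=i + 1, done=False)
states, actions, rewards, next_states, dones = buffer.sample(8)
print(states)
```

In a full DQN, the online network is trained toward `td_target` on each sampled batch, while the target network that produces `next_q_values` is only refreshed from the online network every so often.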

Policy Gradients

Instead of learning a value function, policy gradient methods directly optimize the policy (mapping states to actions) by gradient ascent on expected reward.

- REINFORCE: Monte Carlo method that updates policy based on complete episode returns

- Actor-Critic: Combines policy gradient (actor) with value function (critic) for better sample efficiency

- A3C, PPO: More advanced algorithms that stabilize training and handle continuous action spaces

Policy gradients excel at tasks with stochastic policies and continuous action spaces (like controlling robotic joints).
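To make the idea of gradient ascent on expected reward concrete, here is a REINFORCE-style sketch on the simplest possible case: one-step episodes with two actions. The softmax parameterization, the running-average baseline, and the reward probabilities are assumptions chosen for this illustration.

```python
import math
import random


def softmax(prefs):
    m = max(prefs)  # subtract the max for numerical stability
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce_bandit(reward_probs, steps=2000, lr=0.1, seed=0):
    """Policy gradient on one-step episodes with a softmax policy."""
    rng = random.Random(seed)
    prefs = [0.0] * len(reward_probs)  # policy parameters (action preferences)
    baseline = 0.0                     # running average reward, reduces variance
    for t in range(1, steps + 1):
        probs = softmax(prefs)
        action = rng.choices(range(len(prefs)), weights=probs)[0]
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        advantage = reward - baseline
        baseline += (reward - baseline) / t
        # Gradient of log pi(action) w.r.t. prefs[a] is 1[a == action] - probs[a]
        for a in range(len(prefs)):
            grad_log = (1.0 if a == action else 0.0) - probs[a]
            prefs[a] += lr * advantage * grad_log
    return softmax(prefs)


final_probs = reinforce_bandit([0.2, 0.8])  # hypothetical success rates per action
print(final_probs)
```

The policy stays stochastic throughout, but probability mass shifts toward the action that pays off more often. Full REINFORCE applies the same update to every step of an episode, weighted by the return that followed it.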

Model-Based RL

Instead of learning a policy or value function directly, model-based RL first learns a model of the environment (transition probabilities and rewards). Then it uses planning (e.g., Monte Carlo Tree Search) to decide actions based on the model.

Advantages: more data-efficient (model can be reused), can reason about consequences

Disadvantages: model errors compound, planning is computationally expensive

AlphaGo combined learned policy and value networks with MCTS planning over the known rules of Go to defeat world champions.
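The learn-a-model-then-plan recipe can be sketched on a small tabular problem. Note the substitutions: this example plans with value iteration rather than Monte Carlo Tree Search, and the noisy five-state corridor and all function names are hypothetical.

```python
import random
from collections import defaultdict

N_STATES, GOAL = 5, 4  # hypothetical corridor; state 4 is terminal


def true_step(state, action, rng):
    """Hidden dynamics: the chosen move (0 = left, 1 = right) succeeds 90% of the time."""
    move = action if rng.random() < 0.9 else 1 - action
    next_state = max(0, state - 1) if move == 0 else state + 1
    return next_state, (1.0 if next_state == GOAL else 0.0)


def learn_model(samples_per_pair=200, seed=0):
    """Estimate transition probabilities and mean rewards from experience."""
    rng = random.Random(seed)
    model = {}
    for s in range(GOAL):  # non-terminal states only
        for a in (0, 1):
            counts, reward_sum = defaultdict(int), defaultdict(float)
            for _ in range(samples_per_pair):
                s2, r = true_step(s, a, rng)
                counts[s2] += 1
                reward_sum[s2] += r
            # One (next_state, probability, mean_reward) entry per observed outcome
            model[(s, a)] = [(s2, n / samples_per_pair, reward_sum[s2] / n)
                             for s2, n in counts.items()]
    return model


def plan(model, gamma=0.9, sweeps=100):
    """Value iteration on the learned model (no further environment access)."""
    v = [0.0] * N_STATES  # v[GOAL] stays 0 because the episode ends there

    def backup(s, a):
        return sum(p * (r + gamma * v[s2]) for s2, p, r in model[(s, a)])

    for _ in range(sweeps):
        for s in range(GOAL):
            v[s] = max(backup(s, a) for a in (0, 1))
    policy = [max((0, 1), key=lambda a: backup(s, a)) for s in range(GOAL)]
    return v, policy


values, policy = plan(learn_model())
print(policy)
```

Once the model is estimated, planning costs no further real-world interaction, which is where the data efficiency comes from. The flip side is visible too: any error in the estimated probabilities flows straight into the planned values.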

Multi-Armed Bandits

The simplest RL problem: repeatedly choose among several slot-machine arms (actions), each with an unknown reward distribution. There is no state to track, so the problem isolates the exploration-exploitation trade-off in its purest form. Solutions: ε-greedy, UCB, Thompson sampling.

Bandit algorithms are used in A/B testing, recommendation systems, and clinical trials.
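As one concrete instance, here is a sketch of UCB1, a common form of the UCB approach named above. The arm success rates are made-up numbers, and rewards are assumed to be 0 or 1.

```python
import math
import random


def ucb1(reward_probs, steps=2000, seed=0):
    """UCB1: pick the arm with the highest mean plus an uncertainty bonus."""
    rng = random.Random(seed)
    n_arms = len(reward_probs)
    counts = [0] * n_arms
    means = [0.0] * n_arms
    for t in range(1, steps + 1):
        if t <= n_arms:
            arm = t - 1  # play every arm once to initialize its estimate
        else:
            arm = max(
                range(n_arms),
                key=lambda a: means[a] + math.sqrt(2.0 * math.log(t) / counts[a]),
            )
        reward = 1.0 if rng.random() < reward_probs[arm] else 0.0
        counts[arm] += 1
        means[arm] += (reward - means[arm]) / counts[arm]  # incremental average
    return counts, means


counts, means = ucb1([0.2, 0.5, 0.8])  # hypothetical success rate of each arm
print(counts)
```

The bonus term shrinks as an arm is pulled more often, so rarely tried arms keep getting a second look while most pulls go to the arm that currently looks best.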

Incredible Achievements of Reinforcement Learning

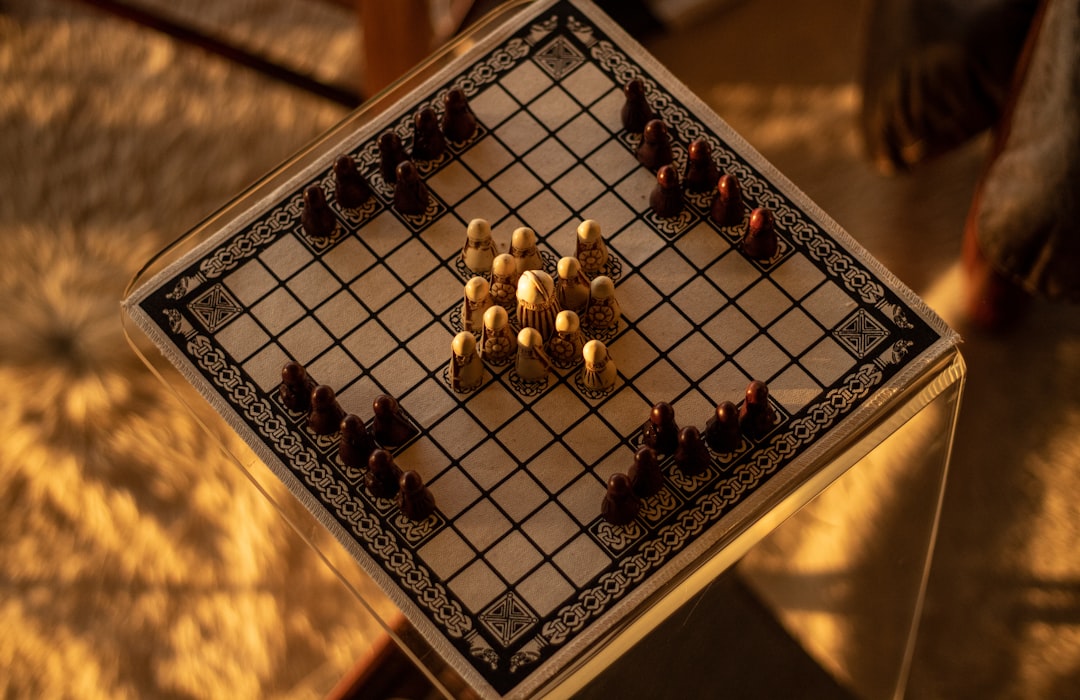

Games

Reinforcement learning has conquered many games, often in ways that revealed new strategies:

- Backgammon: TD-Gammon (1992) reached superhuman level

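- Checkers: Chinook (1994) won the man-machine world championship, though it relied on search and endgame databases rather than learning by trial and error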

- Go: AlphaGo (2016) defeated Lee Sedol, then AlphaGo Zero learned from scratch without human data

- Poker: Libratus (2017) beat top pros in heads-up no-limit Texas Hold’em, handling imperfect information

- Dota 2: OpenAI Five (2019) beat the reigning world champion team, OG

- StarCraft II: DeepMind’s AlphaStar (2019) defeated professional players

- Chess: AlphaZero (2017) learned chess from scratch in hours, surpassing Stockfish

- Atari: DQN and successors beat human experts on many games from pixels only

These achievements demonstrate RL’s ability to master complex, strategic decision-making under uncertainty.

Robotics

RL enables robots to learn motor skills:

- Walking, running, hopping (Boston Dynamics uses RL components)

- Manipulation: grasping objects, opening doors, using tools

- Flying drones with agile maneuvers

- Robotic assembly and packaging

Challenges: collecting experience on real robots is slow and expensive, so simulation-to-real transfer is key.

Autonomous Vehicles

Self-driving cars use RL for high-level decision-making:

- Lane changing

- Merging onto highways

- Negotiating intersections

- Emergency maneuvers

Robustness and safety are critical; pure RL is too risky for deployment, but RL components within safety frameworks show promise.

Resource Management and Operations

RL optimizes complex systems:

- Data center cooling (Google DeepMind reduced the energy used for cooling by up to 40%)

- Inventory management

- Supply chain optimization

- Power grid control

- Traffic light timing

These are classic sequential decision problems with long-term trade-offs.

Finance and Trading

RL agents learn trading strategies, portfolio allocation, and market-making. Challenges: non-stationarity, high noise, risk management. Some hedge funds explore RL, but results are mixed.

Healthcare

RL is being explored for personalized treatment plans, adaptive clinical trials, and robotic surgery assistance. Adoption is still early due to safety constraints and data limitations.

Recommender Systems

YouTube, Netflix, and others use RL to optimize long-term user engagement, not just immediate clicks. The agent recommends items, observes user responses (watch time, ratings), and learns which sequences keep users engaged longer.

Challenges and Limitations

Sample Efficiency

RL typically requires massive amounts of interaction data—millions of game steps, thousands of robot trials. This is impractical for real-world systems where each interaction is slow or costly. Improving sample efficiency is a major research focus: model-based RL, offline RL (learning from existing data), and imitation learning (learning from expert demonstrations).

Exploration in Large Spaces

Finding good policies in huge state-action spaces is hard. Random exploration won’t work for complex tasks. Better exploration strategies (count-based bonuses, curiosity-driven exploration, directed exploration) are needed.

Sparse and Delayed Rewards

Many tasks have rare or delayed rewards (you only win at the end of a long game). The credit assignment problem—figuring out which earlier actions contributed to the final outcome—is tough. Techniques like reward shaping, hindsight experience replay, and temporal abstraction help.

Stability and Convergence

RL training can be unstable, sensitive to hyperparameters, and prone to divergence. Algorithms like PPO were designed to be more stable. Theoretical guarantees are limited.

Safety and Reliability

RL policies can behave unpredictably in unseen situations. For safety-critical applications (autonomous vehicles, medical devices), this is unacceptable. Research in safe RL, constrained optimization, and verification seeks to address this.

Real-World Transfer

Policies learned in simulation may fail in the real world due to model discrepancies. Domain randomization, system identification, and adaptive methods help bridge the sim-real gap.

The Reinforcement Learning Toolbox

Key algorithms and frameworks:

Classic algorithms: Q-learning, SARSA, DQN, Policy Gradients, A2C/A3C, TRPO, PPO, DDPG, TD3, SAC

Advanced: AlphaZero (MCTS + neural networks), IMPALA (distributed RL), Dreamer (world models), MuZero (planning with a learned model, without being given the environment’s rules)

Libraries: Stable Baselines3, Ray RLlib, OpenAI Baselines, Dopamine, TF-Agents, Acme

Environments: OpenAI Gym/Gymnasium (classic control, Atari, MuJoCo), DeepMind Lab, Unity ML-Agents, MiniGrid, ProcGen, Real Robot Challenge

Getting Started with Reinforcement Learning

If you’re interested in RL, here’s a learning path:

- Foundations: Understand Markov Decision Processes (MDPs), Bellman equations, policy/value functions

- Start simple: Implement tabular Q-learning on CliffWalking or FrozenLake (small state spaces)

- Move to function approximation: Implement DQN on CartPole or Atari Pong

- Explore policy gradients: Implement REINFORCE or A2C on a continuous control task (Pendulum)

- Use modern libraries: Try Stable Baselines3 or RLlib on standard benchmarks

- Read papers: Classic papers (DQN, A3C, PPO, AlphaGo) and recent work

- Join the community: RL Discord, Reddit, arXiv, conferences (NeurIPS, ICML, ICLR)

Courses: David Silver’s RL course (UCL), Sergey Levine’s CS285 (Berkeley), and the Reinforcement Learning Specialization on Coursera (University of Alberta).

Reinforcement Learning vs. Other AI Paradigms

It’s helpful to contrast RL with other AI approaches:

Supervised Learning: Requires labeled datasets (input→output pairs). Learns to map inputs to outputs. Great for classification, regression. But relies on high-quality labels and doesn’t learn sequential decision-making.

Unsupervised Learning: Finds structure in unlabeled data (clustering, dimensionality reduction, generative models). No reward signal. Useful for representation learning, which can feed into RL.

Self-Supervised Learning: Learns representations by solving pretext tasks (predicting missing parts, context prediction). This is how large language models are pretrained. The representations can accelerate RL learning.

Imitation Learning: Learns from expert demonstrations (like supervised learning but with actions). Easier than RL but requires expert data and, on its own, rarely exceeds expert performance.

RL: Learns from trial and error with a reward signal. Can discover novel strategies beyond human expertise, but sample inefficient and unstable.

In practice, modern AI systems often combine these: pretrained representations (self-supervised) + RL fine-tuning (reinforcement), or imitation learning followed by RL improvement (as in AlphaGo, or in RL from human feedback for language models).

The Future of Reinforcement Learning

Reinforcement learning is advancing rapidly. Key frontiers:

Offline RL

Learning from fixed, previously collected datasets without new environment interaction. This makes RL applicable to domains where online exploration is expensive or dangerous (healthcare, robotics, finance). Algorithms like Conservative Q-Learning (CQL) and Implicit Q-Learning (IQL) show promise.

Multi-Agent RL

Multiple agents learning together, cooperating or competing. Applications: multi-robot coordination, economic markets, multi-player games (Dota 2, StarCraft), social dilemmas. Challenges: non-stationarity, communication, emergent behaviors.

Hierarchical RL

Learning temporally extended skills (options, subroutines) that can be reused across tasks. This enables lifelong learning and transfer.

Meta-RL

Learning to learn: algorithms that adapt quickly to new tasks with minimal experience. The aim is to approach the human ability to generalize from few examples.

RL for Science

Using RL to discover new scientific knowledge: controlling fusion reactors, optimizing molecular structures, designing new materials. Related work includes DeepMind’s AlphaFold (though it is primarily supervised) and subsequent RL-based approaches for protein design.

Scalable and Generalist RL

Moving beyond narrow, tabula rasa learning to systems that can leverage prior knowledge and scale to many tasks. The goal: a general learning algorithm that can master any sequential decision problem given enough compute.

Safety and Alignment

Ensuring RL systems act safely and in accordance with human values. This includes robust decision-making under uncertainty, avoiding negative side effects, and aligning rewards with true human preferences (avoiding reward hacking).

Common Misconceptions About Reinforcement Learning

"RL is just trial and error": While trial and error is core, sophisticated RL involves function approximation, planning, hierarchical abstraction, and complex statistical learning. It’s not random guessing.

"RL needs millions of tries": Early RL was indeed sample inefficient, but modern algorithms, better architectures, and pretraining have improved efficiency dramatically. Some tasks can be learned in thousands or even hundreds of episodes.

"RL is only for games": Games are benchmark problems, but RL is applied to robotics, control systems, business decisions, and more. The principles generalize.

"RL will solve AGI": RL is a crucial piece of the AGI puzzle (agency, sequential decision-making), but alone it’s insufficient. Integration with perception, language, memory, and social intelligence is needed.

A Simple Example: Training a Robot to Walk

Let’s make it concrete: teaching a robot to walk using RL.

State: Joint angles, velocities, body orientation, foot contacts

Action: Torque commands to each motor

Reward: + reward for forward velocity, – penalty for energy use, falling, or excessive joint stress

The robot starts with random movements (exploration). It might fall immediately (negative reward). Over many trials, it learns that coordinated leg movements produce forward motion. It discovers gaits that balance speed and stability. Eventually, it can walk, run, navigate obstacles, and adapt to terrain changes—all without being explicitly programmed with a walking gait.

This trial-and-error learning is powerful because it doesn’t require human engineers to design complex control policies. The robot discovers what works through experience.
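The reward described above could be written as a small function. This is only a sketch: the weights are arbitrary placeholders, squared torque is used as a rough proxy for energy use, and the joint-stress penalty is left out for brevity.

```python
def walking_reward(forward_velocity, torques, has_fallen,
                   velocity_weight=1.0, energy_weight=0.001, fall_penalty=10.0):
    """Hypothetical per-step reward for a walking robot."""
    # Squared torques as a simple stand-in for energy consumption
    energy_cost = sum(t * t for t in torques)
    reward = velocity_weight * forward_velocity - energy_weight * energy_cost
    if has_fallen:
        reward -= fall_penalty
    return reward


print(walking_reward(1.0, [10.0, 10.0], has_fallen=False))  # moving forward
print(walking_reward(0.0, [10.0, 10.0], has_fallen=True))   # fell over
```

In practice, much of the engineering effort goes into tuning weights like these, since an agent will exploit whatever the reward actually measures rather than what the designer intended.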

Conclusion: Learning by Doing

Reinforcement learning captures a fundamental principle: intelligent behavior emerges from interaction with an environment guided by feedback. It’s how nature built intelligence through evolution and development. Now we’re building machines that learn the same way.

The applications are vast—any domain requiring sequential decisions under uncertainty can benefit from RL. While challenges remain (sample efficiency, safety, real-world transfer), progress is rapid.

What makes RL truly special is its potential for continuous improvement. Unlike static AI models, RL agents can keep learning from new experiences, adapting to changing environments, and discovering novel strategies. This ongoing learning capability is essential for AI that operates in the open world.

As RL becomes more sample-efficient, safer, and easier to apply, we’ll see it embedded in more products and systems: smarter robots, more capable autonomous vehicles, personalized education tutors, adaptive medical devices, and AI assistants that learn from our interactions.

The next time you see a machine do something impressive—a robot cartwheeling, a drone performing a precision flip, an AI beating a grandmaster at chess—remember: it probably learned by getting it wrong, many times, before getting it right. That’s the essence of reinforcement learning. Trial, error, and eventual mastery. The machine’s version of falling down and getting back up.
